Can You Trust Your Google Analytics Data?

Your data in Google Analytics may not be as accurate as you think. If you have a high volume of visits, your data could easily be off by 10-80%, or even more. Shocking, right?

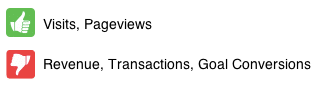

Our fear is that people aren’t aware of this and are making data-driven decisions based on potentially inaccurate data. So what data can you trust? Well, the short answer is that you can trust data such as visits and pageviews, but you can’t rely on revenues, transactions, goal conversions, and conversion rates.

In this post, we will take a deep dive into the world of sampled Google Analytics data and help you understand when you should (or should not) trust the data.

Reasons for Inaccurate Data

One reason for inaccurate data is your implementation; we’ve focused on that topic in previous blog posts and we also offer consulting services to expertly address those issues.

Another reason, which is outside of your control in Google Analytics Standard, is the amount of data you have and the resulting likelihood of receiving sampled data in the Google Analytics reporting interface (or even via the API). We’ll be focusing on the latter.

How Does Sampling Work in Google Analytics?

The majority of the Standard reports you find in Google Analytics are not sampled. They’ve been pre-aggregated by Google’s processing servers, so no matter your date range, you’ll be looking at unsampled data. There are, however, a number of triggers that cause sampled data in GA.

The primary reason for sampled data is that your selected date range has more than 500k visits and you are running a report that is not pre-aggregated, applying an advanced segment (default or custom), or both. Before reading the remainder of this post, it is very helpful to read the details about how sampling works in Google Analytics.

To be clear, we are not talking about data collection sampling via _setSampleRate (so ignore that in your reading at the bottom of the sampling article referenced). Data collection sampling is a very straightforward concept in which you elect to send only a specific percentage of data to GA. In this post, we are talking about the automatic sampling of data in Google Analytics, which exists in both GA Premium and GA Standard (the difference being the ability to run an unsampled query and download the data in Google Analytics Premium).

Sampling Slider

To avoid unnecessary sampling in the interface, make sure you are aware of the sampling slider, and only make decisions based on ‘higher precision’ data.

Where is the Sampling Slider? When sampling occurs, you will see the checkerboard button appear (indicated by the hand cursor in the image to the right) and when clicked it will display the sampling slider (as highlighted in blue to the right).

How do you use the Sampling Slider? You move the slider between the Faster Processing and Higher Precision settings. In the examples we provide, we use two specific slider settings:

- 50% Slider Setting (default setting) – A balance between ‘Faster Processing’ and ‘Higher Precision’, which is the default Google Analytics setting. Data can be under- or over-reported by 80% or more using this setting. While it is nice to move around the interface faster, we don’t recommend this setting or anything further to the left of 50%.

- 100% Slider Setting (Highest Precision) – The farthest right setting for ‘Higher Precision’ (same as shown in the screenshot above), which requires the user to manually move the slider to the far right. Data is usually within 10% of the actual value. Keep in mind that this setting is ‘Higher’, not the ‘Highest’, precision, and does not always yield more accurate data than the 50% default slider setting.

The Problem

When you have a high volume of visits, the quality of your analysis can be hampered by sampled data. The notification that the data you are looking at is sampled is shown below and appears at the top right of the report:

![]()

When you see this notification, you are presented with two facts about this sampling:

- The report you are looking at is based on X visits and that is Y percentage of total visits.

- The percentage of total visits that your segment represents (if you don’t have a segment applied, this is 100% of your total visits).

So, what is missing here?

It is great that Google gives you this information, but the missing data point is the accuracy level of the data (at the report’s aggregate level as well as at the row level). Long ago, Google used to show a +/- percentage next to each row of data; unfortunately, this important piece of data was removed a while back. Without it, we fear that people are making data-driven decisions on potentially inaccurate data.

How Accurate is Sampled Data?

To answer this question, we analyzed data across various dimensions and metrics with a variety of sample sizes and then compared it to unsampled data obtained from Google Analytics Premium.

One advantage of Google Analytics Premium is that when you see the sampling notification bar, you can simply request the report you are looking at to be delivered to you as unsampled data. We’ve leveraged this feature in this post to deliver to you important insights about sampled data.

Our Approach

First, let’s review our approach:

- Our tests simulate actual real-world queries.

- We were interested in the following metrics: visits, transactions, revenue, and ecommerce conversion rate.

- We wanted to view the above metrics by two different data dimensions: source/medium and region/state (filtered for US only).

- We built two separate custom reports, one for each data dimension noted above, and then the metrics that matter to us.

- A different date range was used for each custom report in order to get different sample sizes, since sample size likely impacts data quality.

- Four tests were run for each custom report:

  - No segment applied: sampled data with the sampling slider bar at 50% and another query with the slider bar at 100% (this data is sampled because the dimension/metric combination we selected is not pre-aggregated by Google Analytics) versus unsampled data.

  - New Visitors segment applied: sampled data with the sampling slider bar at 50% and another query with the slider bar at 100% versus unsampled data.

  - Mobile Traffic segment applied: sampled data with the sampling slider bar at 50% and another query with the slider bar at 100% versus unsampled data.

  - Android Traffic segment applied (custom segment matching OS = ‘Android’): sampled data with the sampling slider bar at 50% and another query with the slider bar at 100% versus unsampled data.

- Unsampled data was obtained using Google Analytics Premium (not a feature available for Google Analytics Standard).

- Since we did this for two custom reports, we ended up having 24 total data queries (3 queries for each test * 4 tests per custom report * 2 custom reports).
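As a quick check on that count, the test matrix described above can be enumerated in a few lines of Python. This is only an illustrative sketch: the labels are taken from the list above, while the names `REPORTS`, `SEGMENTS`, `MODES`, and `build_query_matrix` are made up for this example.

```python
from itertools import product

# Labels come from the approach described above; the variable names are illustrative.
REPORTS = ["Source/Medium", "US Region (State)"]
SEGMENTS = ["No segment", "New Visitors", "Mobile Traffic", "Android Traffic"]
MODES = ["sampled, slider at 50%", "sampled, slider at 100%", "unsampled (Premium)"]


def build_query_matrix():
    """Return one entry per report/segment/mode combination."""
    return [
        {"report": report, "segment": segment, "mode": mode}
        for report, segment, mode in product(REPORTS, SEGMENTS, MODES)
    ]


if __name__ == "__main__":
    queries = build_query_matrix()
    # 2 reports * 4 segments * 3 modes
    print(len(queries))
```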

The table below summarizes what we know about the sampled data, prior to comparing it to the unsampled Google Analytics Premium data:

As you can see above, the sample sizes are consistent across the various sampled data for each of our two reports. This makes sense as we are using the same date range and just selecting a different segment and sampling bar position.

The important thing to note before we move on is that, in the order the segments appear above, the % of total visits that the segment represents decreases from 100% (for no segment) all the way down to 4.54% (for the Android segment). In between, we captured data points at 56% and 14%.

The Results

We performed three separate data quality analyses. First, we’ll look at the overall metric accuracy across all data in the report. After that, we’ll look at two subsets of data (individual row accuracy and top 10 row accuracy). The percentages shown throughout this analysis are variances as compared to the unsampled data.
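For readers who want to reproduce this kind of comparison, here is a minimal Python sketch of a variance calculation. It assumes the variance is the signed difference between the sampled and unsampled values, expressed as a percentage of the unsampled value; the function name `variance_pct` is our own and not part of any Google Analytics tooling.

```python
def variance_pct(sampled, unsampled):
    """Signed variance of a sampled value relative to the unsampled value, in percent.

    Negative means the sampled report under-reports; positive means it over-reports.
    Assumed definition: (sampled - unsampled) / unsampled * 100.
    """
    if unsampled == 0:
        raise ValueError("unsampled value must be non-zero to compute a variance")
    return (sampled - unsampled) / unsampled * 100.0


if __name__ == "__main__":
    # 985,400 sampled visits against 1,000,000 unsampled visits.
    print(round(variance_pct(985_400, 1_000_000), 2))  # -1.46
```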

Data Quality Analysis #1 – Overall Metric Accuracy

For the Source/Medium data dimension query, the below table contains the results.

Let’s review the results of the Source/Medium query:

Visits:

- The visits metric is quite reliable across all samples and sampling slider bar settings (keep in mind we are looking at the overall metrics and not individual rows just yet).

- The largest variation was -1.46%. If we were dealing with an unsampled value of 1,000,000 visits, then this variation would yield 985,400 visits. Not terrible by any means.

- Increasing the sampling slider bar to 100% (500k visits) did not always yield more accurate data for the visits metric.

Transactions:

- Accuracy ranged from -0.92% (quite good) to -11.86% (now we are getting into unreliable data).

- 1,000 transactions (unsampled) at a -11.86% variation results in 881 transactions in sampled data (119 missing transactions).

Revenue:

- The largest variance here was -16.09%, and the Android segment was off by +11.37%. It is important to note that sampled data may be under- or over-reported.

- At a -16.09% variance, $500,000 becomes a sampled value of $419,550. More than $80,000 unaccounted for in this example is a big problem.

Ecommerce Conversion Rate:

- Accuracy ranged from -1.21% to -12.47%

For the US Region (States) data dimension query, the below table contains the results.

Let’s review the results of the US Regions query:

Visits:

- The visits metric is reliable just as it was in our source/medium queries.

- The largest variation was +0.89% for our Android segment (4.54% of total visits) with the sampling slider set to 100%.

- Oddly enough, when the sampling slider was at 50% (250k visits), the overall data was more accurate. We definitely cannot state that this would always be the case; in fact, it should statistically be a rarer occurrence for fewer visits in a sampled set to yield more accurate data.

- At a +0.89% variance, 1,000,000 visits becomes 1,008,900 (not bad!)

Transactions:

- At a +16.55% variance, 1,000 transactions becomes a sampled value of 1,166

Revenue:

- At a +14.74% variance, $500,000 becomes a sampled value of $573,700 (yikes!)

Ecommerce Conversion Rate:

- Accuracy ranged from -0.18% to +16.29%
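The worked figures in the bullets above all follow the same arithmetic: take the unsampled value and apply the variance as a signed percentage. A small Python sketch (the helper name `sampled_value` is ours, for illustration only):

```python
def sampled_value(unsampled, variance_percent):
    """Value a sampled report would show, given the unsampled value and a signed variance in percent."""
    return unsampled * (1 + variance_percent / 100.0)


if __name__ == "__main__":
    print(round(sampled_value(1_000_000, -1.46)))  # 985400 visits
    print(round(sampled_value(1_000, -11.86)))     # 881 transactions
    print(round(sampled_value(500_000, -16.09)))   # 419550 dollars
    print(round(sampled_value(500_000, 14.74)))    # 573700 dollars
```

Results are rounded because whole visits, transactions, and dollars are what the reports display.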

For the Overall Metric Accuracy, we found that the visits metric presented little concern. We know that sampled data won’t be perfectly accurate, so we can live with a peak variance of -1.46%. On the other hand, I start to get concerned with the transaction and conversion rate metric accuracy and then much more concerned with revenue. I believe the problem here is that Google Analytics uses a sample of visits to compute the data. Of the visits included in the sample, only a few percent (relative to the ecommerce conversion rate) had a transaction, and the revenue values of those transactions differ by quite a bit. You can see how the sampling becomes diluted. If you had an ecommerce site where everyone that transacted had the same revenue amount, then I would suspect that the revenue metric would not be off by as much.
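The dilution effect described above can be illustrated with a small simulation. To be clear about the assumptions: this is not Google’s sampling algorithm, just simple random sampling of visits scaled back up by a multiplier, and every number in it (a 30% traffic source, a 2% conversion rate, log-normally distributed order values) is invented for the example.

```python
import random


def simulate(trials=20, population=200_000, sample_size=10_000, seed=42):
    """Compare the average relative error of a visits estimate and a revenue estimate.

    Returns (mean_visit_error, mean_revenue_error) as fractions of the true values.
    All traffic characteristics below are hypothetical.
    """
    rng = random.Random(seed)

    # Build a made-up population of visits: (came_from_source, revenue).
    visits = []
    for _ in range(population):
        from_source = rng.random() < 0.30   # ~30% of visits come from one source
        converted = rng.random() < 0.02     # ~2% of visits include a transaction
        revenue = rng.lognormvariate(4.0, 1.0) if converted else 0.0
        visits.append((from_source, revenue))

    true_source_visits = sum(1 for from_source, _ in visits if from_source)
    true_revenue = sum(revenue for _, revenue in visits)
    multiplier = population / sample_size

    visit_errors, revenue_errors = [], []
    for _ in range(trials):
        sample = rng.sample(visits, sample_size)
        est_visits = sum(1 for from_source, _ in sample if from_source) * multiplier
        est_revenue = sum(revenue for _, revenue in sample) * multiplier
        visit_errors.append(abs(est_visits - true_source_visits) / true_source_visits)
        revenue_errors.append(abs(est_revenue - true_revenue) / true_revenue)

    return sum(visit_errors) / trials, sum(revenue_errors) / trials


if __name__ == "__main__":
    visit_error, revenue_error = simulate()
    print(f"mean visits error:  {visit_error:.2%}")
    print(f"mean revenue error: {revenue_error:.2%}")
```

Under these assumptions the revenue estimate is noticeably noisier than the visits estimate, because it rests on the few hundred sampled visits that happened to convert rather than on thousands of sampled visits.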

Data Quality Analysis #2 – Top 10 Row Metric Accuracy

For the top 10 row analysis, I sorted the data by the metric being analyzed. The objective was, for example, to show the accuracy of the top 10 revenue rows (which may not always be the top 10 visit rows).

For the Source/Medium data dimension query, the below table contains the results of the top 10 rows.

The results aren’t as accurate as the aggregate metrics. A surprising data point was that the ‘Android Traffic’ segment had a variance of +4.77% on the overall metric accuracy, while the top 10 analysis resulted in a -2.44% variance.

For the US Region (States) data dimension query, the below table contains the results of the top 10 rows.

The results, again, aren’t as accurate as the aggregate metric analysis.

Data Quality Analysis #3 – Individual Row Accuracy Highlights (within the top 10 rows)

For this analysis, I stayed within the top 10 rows of the metric being analyzed so that I would have more reliable data. I could have picked a row that had 1 unsampled transaction and 20 sampled transactions to show a large variance (there are many examples of these), but I assume we want to focus on more actionable data.

The visits metric was usually within +/- 6% for the top 10 rows, but when you get to a more narrowly defined segment, there were some larger discrepancies:

- A source/medium of ‘msn / ppc’ had a +49.37% variance

- A region of District of Columbia (Washington DC) had a -20.20% variance

For the revenue metric, there were a few highlights (and too many weird variances to share):

- Top row #8 reported no revenue for a region when the sampling slider was at 50%; when the slider was at 100%, it was off by +7.94%. Again, this is a top revenue source.

- #1 source of revenue was off by -80.02% for a sampling slider at 50% and then at 100% (‘higher precision’ setting) it was off by -11.52%.

- #6 source of revenue was off by -608.25%, missing several thousand dollars of revenue

- #5 and #7 sources of revenue were over-reported by 56% and 47%

Frightening

The results of individual rows vary quite a lot and would make me worry about presenting results for, say, paid search or even organic search in an accurate manner. For example, I found one of the top sources of revenue (google / organic) to be under-reporting by 31% when sampled. AdWords was under-reporting by thousands of dollars in revenue and, in one case, reporting $0 revenue and 0 transactions. That is frightening if you are using this data to make decisions and saying that there are no mobile visitors (as an example) that transact via paid search when there actually are!

If you get down to a very granular data row (for example a data row that is only 1 visit in unsampled data), then you will have wildly inaccurate data because you’ll be seeing the multiplier of the sampling algorithm. As an example, the data I analyzed contained 1 visit unsampled for a specific source/medium, but in sampled data it showed 23. Why would it show 23? Because 23 happens to be the multiplier. The random sample in GA data included this single visit and all data, including this row, in my sampled results were multiplied by 23. Did I have 23 visits for this specific source? Nope!

BONUS TIP: If you want to see what your sampling multiplier is, you can go to a report that has very granular dimensions such as the ‘All Traffic Source/Medium’ report and then sort ascending on the Visits metric. The smallest value for the Visits metric that you see is likely your multiplier. You could also manually calculate this by taking your known total visits in the date range, prior to any segmentation, and dividing by your sample size (500,000 visits for example). If your date range had 100,000,000 visits (prior to any segmentation or sampling) and you had your sampling slider at 500,000 visits (all the way to the right), then your multiplier would be 200.
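The manual calculation in the tip above is a single division. Here is a minimal Python sketch; the function name `sampling_multiplier` is ours, and the assumption that no sampling occurs (multiplier of 1) when total visits fit within the sample size is a simplification for illustration.

```python
def sampling_multiplier(total_visits, sample_size):
    """Approximate factor by which sampled counts are scaled up.

    total_visits: visits in the date range before any segmentation or sampling.
    sample_size: visits in the sample (for example 500,000 with the slider at far right).
    """
    if sample_size <= 0:
        raise ValueError("sample_size must be positive")
    # Assumption: if all visits fit in the sample, nothing is scaled.
    return max(1.0, total_visits / sample_size)


if __name__ == "__main__":
    print(sampling_multiplier(100_000_000, 500_000))  # 200.0
```

A row that lands in the sample with a single visit would then be displayed as roughly one multiplier’s worth of visits, which is why the smallest Visits value in a granular report hints at the multiplier.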

Conclusions

In our tests, we found sampling in Google Analytics to deliver fairly accurate results for the visits metric. Google’s sampling algorithm samples traffic proportional to the traffic distribution across the date range and then picks random samples from each day to ensure uniform distribution. This method seems to work out quite well when you are sampling across metrics like visits and total pageviews (top-line metrics), but quickly starts to present concerns when only a subset of those visits qualify for a metric such as transactions or revenue. I would expect the same accuracy concerns with goal conversion rates and even bounce rates relative to a page dimension. Additionally, we’ve seen many issues when using a secondary dimension and sampling.
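To make the idea of sampling proportional to the daily traffic distribution concrete, here is a rough Python sketch. It is an illustration of the general technique as described above, not Google’s actual implementation; the function name, the day labels, and the rounding of per-day quotas are all assumptions.

```python
import random


def proportional_daily_sample(visits_by_day, sample_size, seed=0):
    """Pick a random sample of visits from each day, sized in proportion to that day's traffic.

    visits_by_day maps a day label to a list of visit IDs (hypothetical data shape).
    Returns a dict mapping each day label to the sampled visit IDs.
    """
    rng = random.Random(seed)
    total = sum(len(visits) for visits in visits_by_day.values())
    sample = {}
    for day, visits in visits_by_day.items():
        # Each day's quota mirrors its share of total traffic (rounded, so totals may drift slightly).
        quota = min(len(visits), round(sample_size * len(visits) / total))
        sample[day] = rng.sample(visits, quota)
    return sample


if __name__ == "__main__":
    traffic = {
        "day 1": list(range(0, 1_000)),      # 1,000 visits
        "day 2": list(range(1_000, 4_000)),  # 3,000 visits
    }
    picked = proportional_daily_sample(traffic, sample_size=400)
    print({day: len(ids) for day, ids in picked.items()})  # {'day 1': 100, 'day 2': 300}
```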

Be Cautious

When dealing with more granular metrics such as transactions, revenue, and conversion rates, I would be extra-cautious about making data-driven decisions from them when they are sampled. As your segment becomes more narrowly defined and you have a smaller percentage of total visits being used to calculate the sampled data, your accuracy will likely go down. In some cases, it could be accurate, but the point is that you won’t know for sure if the visits that mattered were included in the random sample lottery.

In addition, be cognizant of the sampling level and only make data-driven decisions when the sampling slider is moved to “higher precision” (far right). In data quality analysis #3, this was the difference between under-reporting revenues by 11% or 80%.

Your Options

We’ve just told you that your sampled data is bad and put some numbers behind it to explain how far off it might be. So, what can you do about it?

Option #1 – Go Premium

If you are already using Google Analytics Premium, then simply request the unsampled report via the ‘Export’ menu. If you are a Google Analytics Standard user, you could upgrade to Google Analytics Premium to get this feature. You can contact us to learn more about Google Analytics Premium features, cost, and what we can do for your business as an authorized reseller.

Option #2 – Secondary Tracker

Another, albeit creative, approach would be to implement a secondary tracker with a new web property (UA-#) in select areas (for example, only on the checkout flow or receipt page). If you have fewer than 500k visits that go through this flow (during the date range you wish to analyze), then you’ll be able to get unsampled data for just the pages that you’ve tagged. Some metrics won’t be accurate since you are only capturing a subset of data. For example, time on site and pages/visit would both be inaccurate (only accurate within the constraints of what was tagged). This approach certainly isn’t right for everyone and doesn’t scale. Implementing dual trackers can also be tricky and could potentially even mess up your primary web property if you do it incorrectly. You can work with Blast to help you navigate whether this approach makes sense, as well as the full list of drawbacks and advantages as it pertains to your business needs.

Option #3 – Export Data

Export data using short date ranges, like 1-7 days, that avoid or limit the amount of sampling, and then aggregate the exported spreadsheets externally to analyze. As noted above, if you see the checkerboard button show up on the right side underneath the date selector, then you need to shorten your date range to avoid sampling. Be careful about which metrics you aggregate. For example, you can’t aggregate bounce rate or conversion rate directly, but you can aggregate conversions and visits and then calculate the rate from the totals. If you are interested in this approach, let us know, since we have developed a tool called Unsampler to easily download unsampled reports from Google Analytics.
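The point about rates is easy to get wrong, so here is a short Python sketch of the difference. The export structure (a list of dicts with `visits` and `transactions` keys) and the numbers are hypothetical; real exports would first need to be parsed from the downloaded spreadsheets.

```python
def aggregate_conversion_rate(exports):
    """Conversion rate computed from summed transactions and summed visits (the correct way)."""
    total_visits = sum(export["visits"] for export in exports)
    total_transactions = sum(export["transactions"] for export in exports)
    if total_visits == 0:
        return 0.0
    return total_transactions / total_visits


def mean_of_rates(exports):
    """Naive average of each export's own conversion rate (shown only to contrast)."""
    rates = [e["transactions"] / e["visits"] for e in exports if e["visits"]]
    return sum(rates) / len(rates)


if __name__ == "__main__":
    # Two hypothetical short-range exports with very different traffic levels.
    weekly_exports = [
        {"visits": 1_000, "transactions": 20},  # 2.0% on its own
        {"visits": 3_000, "transactions": 30},  # 1.0% on its own
    ]
    print(f"from totals:   {aggregate_conversion_rate(weekly_exports):.2%}")  # 1.25%
    print(f"mean of rates: {mean_of_rates(weekly_exports):.2%}")              # 1.50%
```

The two results differ because the naive average gives the low-traffic export the same weight as the high-traffic one.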

Option #4 – Collect Clickstream Data

A fourth option would be to collect hit-level (aka clickstream) GA data and store those individual hits in your own data warehouse. At Blast, we have a tool that we developed, Clickstreamr (currently in limited beta), that collects this data and makes every GA hit available in a CSV file that you can consume however you wish (other formats or direct database insertion are possible). With this, your data is completely unsampled, but you will need to have a data warehouse structure in place to handle this level of data and the ability to write queries against it.
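To give a feel for what writing queries against hit-level data can look like, here is a minimal Python sketch that totals revenue by source/medium from a CSV. The column names (`source_medium`, `hit_type`, `revenue`) and the sample rows are invented for illustration; they are not the actual Clickstreamr schema.

```python
import csv
import io
from collections import defaultdict

# Hypothetical hit-level export; column names and values are assumptions for this example.
SAMPLE_CSV = """source_medium,hit_type,revenue
google / organic,pageview,0
google / organic,transaction,120.50
msn / ppc,pageview,0
msn / ppc,transaction,35.00
google / organic,transaction,79.50
"""


def revenue_by_source(csv_text):
    """Sum transaction revenue per source/medium across every hit (no sampling involved)."""
    totals = defaultdict(float)
    for row in csv.DictReader(io.StringIO(csv_text)):
        if row["hit_type"] == "transaction":
            totals[row["source_medium"]] += float(row["revenue"])
    return dict(totals)


if __name__ == "__main__":
    print(revenue_by_source(SAMPLE_CSV))
```

In practice this kind of aggregation would more likely run as a query in the data warehouse; the sketch only shows that every hit contributes to the total.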

Phew, that was a long post. As always, post a comment below to ask any questions you may have.